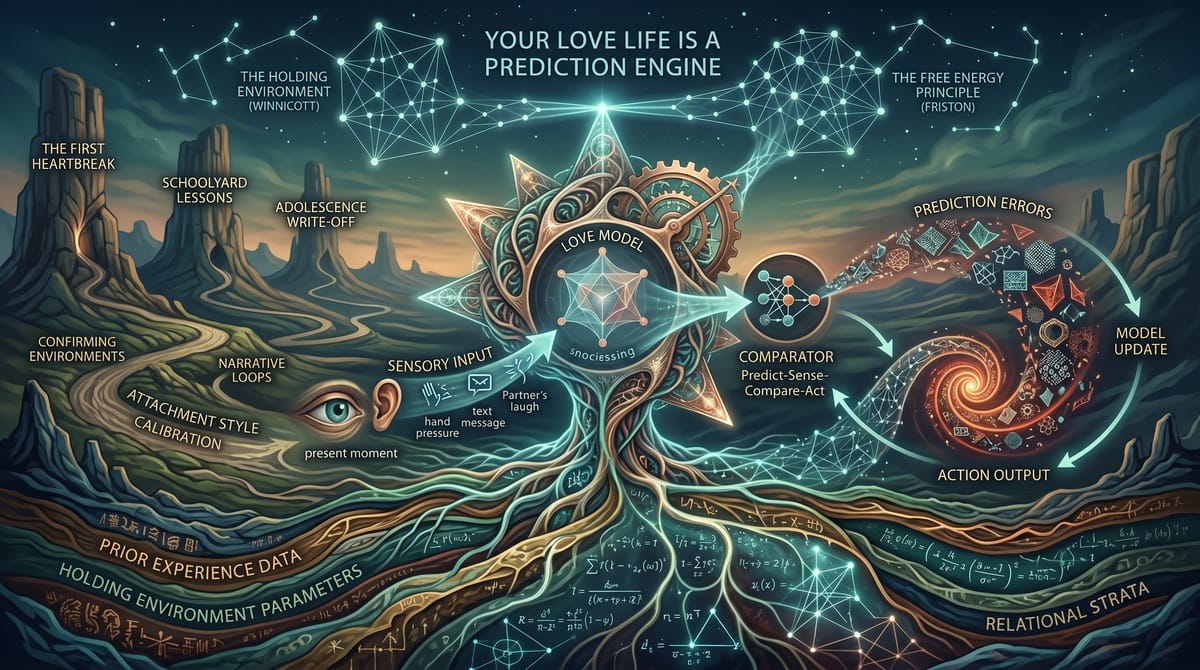

Your Love Life Is a Prediction Engine

You don’t fall in love. You predict it.

That sounds cold. It’s not. It’s the warmest thing your nervous system does. Every moment you spend with another person, your brain is running a quiet, constant operation: predicting what they’ll do next, predicting what it means, predicting whether you’re safe. When those predictions land (when the person across the table laughs where you expected them to laugh, reaches for your hand at the moment you needed them to, says the thing that tells you they get it), something settles in your chest. Not excitement. Something deeper. The particular calm of a system whose model of the world just got confirmed.

That’s what love feels like from the inside. Not a feeling that arrives. A prediction that lands.

A note before we go further, because the framework we’re about to walk through is seductive in the way that good frameworks always are: it makes things feel solved. It isn’t a solution. It’s a lens. Donald Winnicott, the pediatrician and psychoanalyst who will show up throughout this book because he was right about nearly everything that matters, had a concept called the transitional object: the stuffed bear the toddler carries everywhere, the blanket that is simultaneously real and symbolic, the thing the child holds and uses and eventually outgrows without anyone announcing that the bear was never actually magic. This book is a transitional object. Hold it. Use it. Try the framework on. And remember that the map is never the territory; the map is a thing you carry while you learn to navigate the territory yourself. The moment the framework starts feeling like the final word rather than a useful lens, set it down for a minute. Pick it up again when it’s useful. That’s how good tools work.

The Machine You Didn’t Know You Were Running

The framework behind this is called active inference. It comes from computational neuroscience, and the short version is this: your brain is not a stimulus-response machine. It’s a prediction engine. It doesn’t sit around waiting for things to happen and then react. It generates a constant stream of predictions about what’s going to happen next, then checks those predictions against what actually shows up through your senses.

Four operations, running all the time, mostly below awareness.

Predict. Your brain generates an expectation. Not a conscious thought, but a neural model: a configuration of prior experience that says, based on everything I’ve learned, here’s what should happen next.

Sense. The world delivers actual data. What your partner’s face is doing. What their voice sounds like. What they said, or didn’t say. The pressure of their hand. The silence after you asked a question.

Compare. The prediction meets the sensory evidence. If they match, the system barely registers it. Confirmation is quiet. If they don’t match, the system generates what’s called a prediction error: the gap between what you expected and what you got. Prediction error is loud. Your nervous system treats it as news.

Act. Based on the comparison, the system does one of two things. It updates the model: okay, I predicted wrong, let me adjust my expectations. Or it acts on the world to make the world match the prediction: this doesn’t match what I expected, so I’ll do something to fix it. Move toward. Pull away. Ask the question. Avoid the question. Send the text. Don’t send the text.

That’s it. Predict, sense, compare, act. Every moment of every relationship you’ve ever been in, that loop was running. The fights, the make-ups, the slow erosion of trust, the sudden flood of intimacy, the morning you woke up and knew it was over: all of it was your prediction engine doing its job. Generating models, testing them against a person who was doing the same thing back at you.

Karl Friston, the neuroscientist who formalized this framework, grounds it in what he calls the free energy principle: all self-organizing systems, from single cells to human brains, work to minimize the gap between their predictions and their sensory experience. They minimize surprise. Not the fun, birthday-party kind of surprise. The information-theoretic kind: a measure of how improbable your sensory input is under your model of the world. At the biological level, a lot of that kind of surprise means your model is wrong, and being wrong costs energy, and costing energy is a threat to survival.

Your brain hates being wrong the same way your body hates being cold. Both are expensive. Both trigger a response. And in relationships, both feel like something is very, very off.

What a Good First Date Actually Is

You’ve had the experience. You sit down across from someone and within twenty minutes something clicks. The conversation flows. The timing is right. You finish each other’s thoughts, or better, you surprise each other in ways that feel good rather than jarring. You leave thinking: chemistry.

Here’s what actually happened.

Your predictive models were aligned. Not identical, but aligned. You both arrived with models of how a conversation should go, how attraction gets expressed, what humor sounds like, what intimacy feels like at a table in a restaurant with two drinks and nowhere to be. And those models were close enough that the prediction errors between you were small and pleasant. Every time they said something slightly unexpected, but in a direction your system could integrate, your brain registered it as novelty without threat. The error was small enough to be interesting and large enough to be alive.

Lisa Feldman Barrett, whose work on constructed emotion rewrites most of what people think they know about feelings, argues that emotions aren’t reactions to the world. They’re your brain’s predictions about what the world means, made real in your body before you’re aware of them. The flutter in your chest on that first date wasn’t a reaction to the other person. It was your prediction engine generating a model (this person is safe, this is going well, this might be something) and constructing the corresponding bodily state to match. You felt attracted because your brain predicted attraction and built the feeling to go with it.

The date where nothing clicks? Same mechanism, opposite outcome. The prediction errors were too large, too frequent, or in the wrong direction. They laughed at something that wasn’t funny to you. They said something your model couldn’t place. The timing was off, not catastrophically, just enough that your system kept generating small errors it couldn’t resolve. You left thinking: nice person, no spark. Translation: your prediction engines weren’t calibrated to each other, and the cost of running that mismatch for two hours was enough that your system voted no.

None of this is conscious. None of it is a choice. It’s two prediction engines meeting, testing their models against each other, and arriving at a verdict in the body before the mind gets a vote.

The Fight That Came Out of Nowhere

It didn’t come out of nowhere.

You know the one. Tuesday evening, nothing special, and suddenly you’re in a full-blown argument about dishes, or the way they said “fine” when you asked about dinner, or the text they sent your mother, or nothing identifiable at all. Just a wall of tension that materialized between you like weather.

In the prediction framework, that wall has a specific architecture. It’s a cascade of prediction errors that neither system could resolve fast enough.

Here’s how it builds. One system generates a prediction: they’ll ask about my day when I walk in. The prediction fails: they’re on their phone, they don’t look up. Error generated. The system now has two options: update the model (they’re busy, it’s fine, I’ll get their attention in a minute) or act on the world (say something, make them look up, test whether they care). If the system is well-calibrated, it updates. If it’s running hot (if it arrived home already carrying prediction errors from the workday, already in a slightly activated state), it acts. It says something. The tone is off because the system is generating behavior from a threat-state model, not a connection-state model.

Now the other system catches the tone. This generates a prediction error in their engine: that’s not how they usually talk to me. Their system has the same two options: update the model or act. If they’re well-calibrated, they might say, “You okay?” That’s a bid to gather more sensory data before the model spirals. If they’re activated too, they match the tone. Now both systems are generating prediction errors in each other, each error feeding the next, each response slightly off from what the other predicted, and the cascade accelerates.

By the time you’re arguing about the dishes, you’re not arguing about the dishes. You’re two prediction engines caught in a mutual error spiral, each one’s output becoming the other’s input, the errors compounding faster than either system can resolve them. The fight that came out of nowhere came out of prediction error. It always does.

Anil Seth, the neuroscientist who studies consciousness through the lens of prediction, describes the brain as a “prediction machine” that constructs experience rather than receiving it. Your experience of the fight (the anger, the hurt, the bewildering sense that the person across from you has become a stranger) wasn’t you perceiving what was happening. It was your prediction engine constructing a reality in which the person you love is a threat, because the accumulation of errors had pushed your model past a threshold.

The fight was real. But the threat that fueled it, the sense that the person across from you had become someone dangerous, was in most cases a prediction your engine constructed from accumulated errors rather than from anything the current moment actually required.

The Deeper Training

Here’s where it gets personal.

Your prediction engine didn’t start running the day you met your partner. It’s been running since before you could talk. And it has been trained, continuously, by every relational environment you’ve moved through. Not damaged once. Not broken by one person. Shaped by a series of rational responses to real conditions, each one updating the model, each one the best available move given what the system knew at the time.

The first environment was the body of another person. Winnicott, who spent decades studying what happens between mothers and infants, called this the holding environment. (He was a contemporary of John Bowlby; the two were parallel pioneers who occasionally clashed, Bowlby leaning into ethology and empiricism while Winnicott stayed in the consulting room, but their ideas cross-pollinated in ways most people in the attachment conversation have never heard about.) The infant doesn’t have a functioning prediction engine yet. It has needs, and distress when those needs aren’t met, and no capacity to manage either one. The holding environment is someone else’s nervous system doing that work for you. The caregiver’s responsiveness is the baby’s first data about how the world operates. Does the world respond when I signal? Does it soothe? Does it show up?

Winnicott’s precision matters here. He didn’t say the baby needs a perfect mother. He said the baby needs a good-enough mother. A perfect mother would eliminate prediction error entirely; the baby would never experience the gap between expectation and reality. And this, Winnicott understood, would be a disaster. A system that never encounters prediction error never learns to handle it. A good-enough mother does something harder: she gets it right enough of the time that the gaps are survivable. The baby’s nascent prediction engine gets to practice: the world didn’t match my model, and I didn’t die. Update. Try again. This is where the initial parameters get set.

But the initial parameters are not the whole story. Not even close.

The prediction engine keeps training. The playground teaches it lessons the holding environment never covered. The kid who got excluded learns something about which social signals to amplify. The kid who got included learns something about which to trust. Both responses are rational given the data available, and both update the model in ways the child has no language to describe but will carry into every relationship that follows.

Adolescence is a full rewrite of the precision weights (how much confidence the system assigns to each kind of signal and each kind of error), and it happens at the worst possible time: when the social stakes feel existential and the prefrontal cortex is still under construction. The first person who breaks your heart doesn’t just hurt; they deliver a massive, specific prediction error that the system metabolizes into a new model of how love works. If the heartbreak was sudden, the model learns that connection can vanish without warning. If the heartbreak was slow, the model learns that erosion is invisible until it’s complete. If the heartbreak involved betrayal, the model learns that the signals you trusted most are the ones most likely to mislead. Every one of these updates is rational, and every one rewires the precision weights in ways that will shape who you find attractive, who you trust, and how long you stay in the room when things get hard, for years to come.

Then the story you tell yourself about why it happened. This is the part nobody talks about, and it may be the most consequential training data of all. The prediction engine doesn’t just process the event; it processes your narrative of the event. “He left because I was too much” trains a different model than “he left because he wasn’t ready.” Same event. Same sensory data. Radically different model update, depending on which story the system adopts. And the story feels like insight, feels like understanding, but it’s actually another layer of training data. The model updates on the narrative as though it were evidence.

Post-college, the configurations start to compound. You build a life around the model. You choose partners who confirm the predictions, not because you’re drawn to suffering but because the prediction engine gravitates toward environments where its existing model generates the fewest errors. The anxious person dates someone who runs hot and cold because that pattern generates errors the system already knows how to process. The avoidant person dates someone who doesn’t push because low-demand environments are where the model runs most efficiently. Each relationship provides confirming data. Each confirmation deepens the configuration. Each step is rational.

By the time you arrive in your current relationship, the prediction engine you’re running is the product of the holding environment, the playground, the first heartbreak, the story you told about it, the next relationship, the story you told about that, the years of accumulated evidence that your model is correct because the environments you chose kept proving it right. Not because you’re broken. Because you’re a system that has been doing exactly what systems do: updating on available evidence, minimizing prediction error, building the most efficient model it can.

Friston’s math and Winnicott’s clinical observation converge here. The free energy principle says systems minimize prediction error. Winnicott says the infant needs an environment where prediction error is present but manageable. The holding environment sets the initial parameters; the caregiver calibrates the baby’s system to assign the right amount of importance to the right signals. But the calibration continues for the rest of your life. Every relational environment you pass through is another round of training. The question isn’t “what did your mother do to you?” The question is “what did every environment, including the ones you chose, teach your prediction engine about how love works?”

The current configuration of your prediction engine isn’t a wound. It’s an accumulation. A series of rational trade-offs, each one adaptive in context, each one compounding into the system you’re running now. The problem isn’t that you were damaged. The problem is that the configuration that was rational in every previous environment may not be rational in this one. The environment changed. The configuration, running on accumulated evidence from environments that no longer exist, didn’t.

These aren’t personality traits. They’re not character flaws. They’re not vibes. They’re the specific configuration of a prediction engine trained across a lifetime of environments, with building materials gathered at every stop.

What This Means For You, Right Now

If you’re in a relationship that works and you don’t know why, it’s probably because your prediction engines are well-calibrated to each other. Your models overlap enough that the errors between you are small and manageable. When something goes wrong, both systems update rather than escalate. You live in each other’s predictive comfort zone, not because you’re boring, but because your systems are flexible enough to integrate each other’s surprises without treating them as threats.

If you’re in a relationship that keeps going sideways in the same pattern (the same fight, the same withdrawal, the same cycle of intensity and collapse), it’s probably because your prediction engines are generating systematic errors that neither of you can resolve. The models are miscalibrated to each other, or to reality, or both. And the miscalibration isn’t random. It’s the fingerprint of the environments that trained each system. You’re not “bad at relationships.” You’re running a prediction engine that was trained on specific data, and it’s doing exactly what it was trained to do.

If you’ve ever been told you “have issues” with trust, or intimacy, or communication, what you actually have is a prediction engine with a specific configuration. A specific set of priors about how relationships work, which signals to amplify, which to mute, when to approach, when to avoid. That configuration was rational at every step. Not installed by one person in one environment, but accumulated across every relational environment you’ve passed through, each update the best available response to the conditions at the time. The problem isn’t that you’re broken. The problem is that the accumulated configuration, rational in every previous context, may not be rational in this one.

Your attachment style isn’t who you are. It’s how your prediction engine was trained.

And prediction engines can be retrained. Not easily. Not quickly. Not by reading a single book or having a single insight or dating someone your therapist approves of. But the architecture is plastic. The precision weights can shift. The model can update. You can learn, slowly and with the right conditions, to run different predictions.

The rest of this book is about how.